Benchmarking ML APIs

Comparing FastAPI and Crow.Cpp for serving ML models.

Introduction

In this article, I compare the performance of prediction models served with Crow.Cpp and FastAPI, in terms of both latency and throughput. I do not compare ease of development or deployment; the focus is solely on performance.

FastAPI is a modern Python API framework designed with speed of both development and execution in mind, while Crow.Cpp is a C++ micro web framework for creating HTTP or WebSocket web services.

The metrics I will be measuring include:

- Latency: The time taken to process a request and return a response.

- Throughput: The number of requests that can be processed per second.

- Resource Utilization: CPU and memory usage during the tests.

- Error Rate: The percentage of requests that resulted in an error.
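As a rough illustration of how the latency, throughput, and error-rate figures relate to raw request data, here is a minimal sketch. The `RequestRecord` type and the `summarize` function are hypothetical names introduced for this example; they are not part of the benchmark code. Resource utilization is read from the operating system and is not covered here.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RequestRecord:
    """One completed request (hypothetical record type for illustration)."""
    latency_ms: float  # time from sending the request to receiving the response
    ok: bool           # False if the request failed or returned an error status


def summarize(records: list[RequestRecord], duration_s: float) -> dict[str, float]:
    """Compute latency, throughput, and error-rate figures for one test run."""
    if not records or duration_s <= 0:
        raise ValueError("need at least one record and a positive duration")
    latencies = [r.latency_ms for r in records]
    failures = sum(1 for r in records if not r.ok)
    return {
        "min_ms": min(latencies),
        "avg_ms": mean(latencies),
        "max_ms": max(latencies),
        "rps": len(records) / duration_s,  # throughput: requests per second
        "error_rate_pct": 100.0 * failures / len(records),
    }


if __name__ == "__main__":
    sample = [
        RequestRecord(10.0, True),
        RequestRecord(30.0, True),
        RequestRecord(50.0, False),
        RequestRecord(30.0, True),
    ]
    print(summarize(sample, duration_s=2.0))
```

A load-testing tool such as Locust reports these aggregates itself, so a helper like this is only needed when working from raw request logs.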

Testing Summary

TL;DR: Crow.Cpp handled roughly 80% more requests per second than FastAPI (2200+ vs. 1200+ RPS), while both frameworks had similar average latency and neither recorded any errors.

- Crow.Cpp handled roughly 80% more requests per second than FastAPI; put the other way, FastAPI's throughput was about 45% lower.

- The average response time is about the same for both frameworks.

- The min response time is almost instant for both frameworks.

- The max response time of Crow.Cpp was more than 50% lower than FastAPI's (2163 ms vs. 5147 ms).

- No errors were recorded for either framework during the tests.

Experimental Setup

Environment Specs

- OS: Pop!_OS 24.04 LTS x86_64

- CPU: AMD Ryzen 9 7900 (24 threads) @ 5.49 GHz

- RAM: 64GB DDR5 @ 6000 MHz

API Implementation

There is only one endpoint for both APIs, which is the /predict endpoint. This endpoint accepts a POST request with a JSON payload containing the input data for the ML model. The API then processes the input data, makes a prediction using the ML model, and returns the prediction in the response.
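Independent of the framework, the request/response contract of such an endpoint can be sketched as a plain function. This is only an illustration under stated assumptions: the JSON field names `features` and `prediction`, the `handle_predict` function, and the averaging "model" are all placeholders, since the actual payload schema and model are not described here.

```python
import json


def predict(features: list[float]) -> float:
    """Placeholder model (assumption): stands in for the real ML model."""
    return sum(features) / len(features)


def handle_predict(body: bytes) -> tuple[int, bytes]:
    """Turn a POST /predict request body into (status code, response body)."""
    try:
        payload = json.loads(body)
        features = [float(x) for x in payload["features"]]
        if not features:
            raise ValueError("empty feature list")
    except (ValueError, KeyError, TypeError):
        # Malformed JSON, missing field, or non-numeric values
        return 400, json.dumps({"error": "invalid input"}).encode()
    return 200, json.dumps({"prediction": predict(features)}).encode()


if __name__ == "__main__":
    print(handle_predict(b'{"features": [1.0, 2.0, 3.0]}'))
```

In both frameworks, the route handler would wrap logic of this shape: parse the JSON body, run the model, and serialize the result.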

Testing Methodology

To measure the performance of both APIs, I use Locust to simulate concurrent users making requests to the /predict endpoint.

For the tests, I simulate 200 concurrent users making requests to the API for a duration of 5 minutes. The input data for the ML model is randomly generated for each request to ensure that the tests are realistic and not biased towards any specific input.
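Generating a fresh random input for each request could look roughly like the sketch below. The `random_payload` helper, the `features` field name, the default of four features, and the value range are assumptions made for illustration; the real input schema depends on the model being served.

```python
import json
import random
from typing import Optional


def random_payload(n_features: int = 4, rng: Optional[random.Random] = None) -> str:
    """Build a JSON request body with freshly generated random feature values."""
    rng = rng if rng is not None else random.Random()
    # Assumed input range of [0, 1]; adjust to match the model's real inputs
    features = [round(rng.uniform(0.0, 1.0), 4) for _ in range(n_features)]
    return json.dumps({"features": features})


if __name__ == "__main__":
    print(random_payload())
```

In a Locust test, a helper like this would be called inside the task that sends the POST request, so every simulated user submits different data on every request. Passing a seeded `random.Random` makes a run reproducible.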

Results

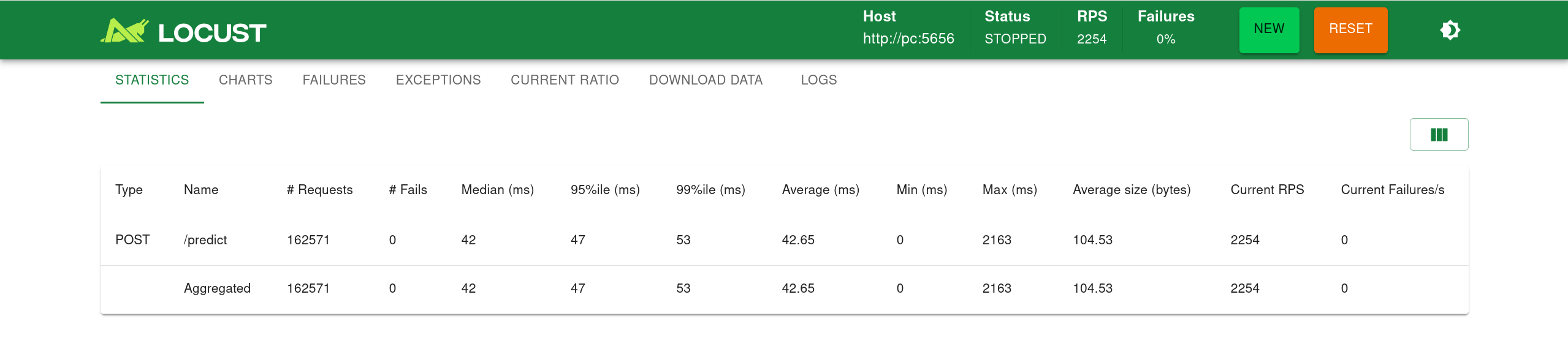

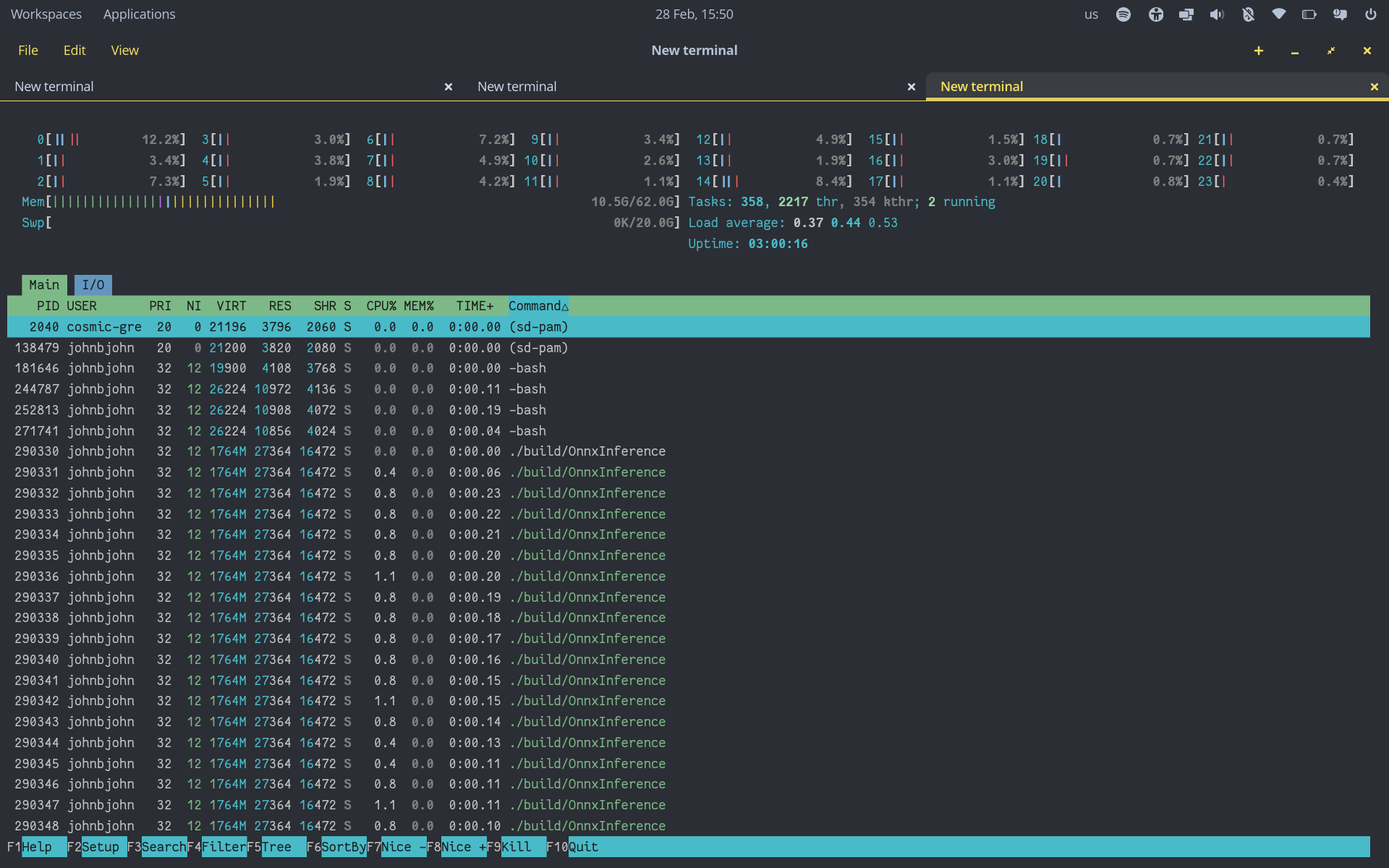

Crow.Cpp

- Avg. RAM usage: 27 MB

- Avg. CPU usage: ~1%

- Thread count: 24 threads (one for each of the CPU's 24 hardware threads)

- Min response time: 0ms (almost instant)

- Max response time: 2163ms (2.163 seconds)

- Avg. response time: 43ms (0.043 seconds)

- Avg. RPS: 2200+ requests per second

- Error rate: 0%

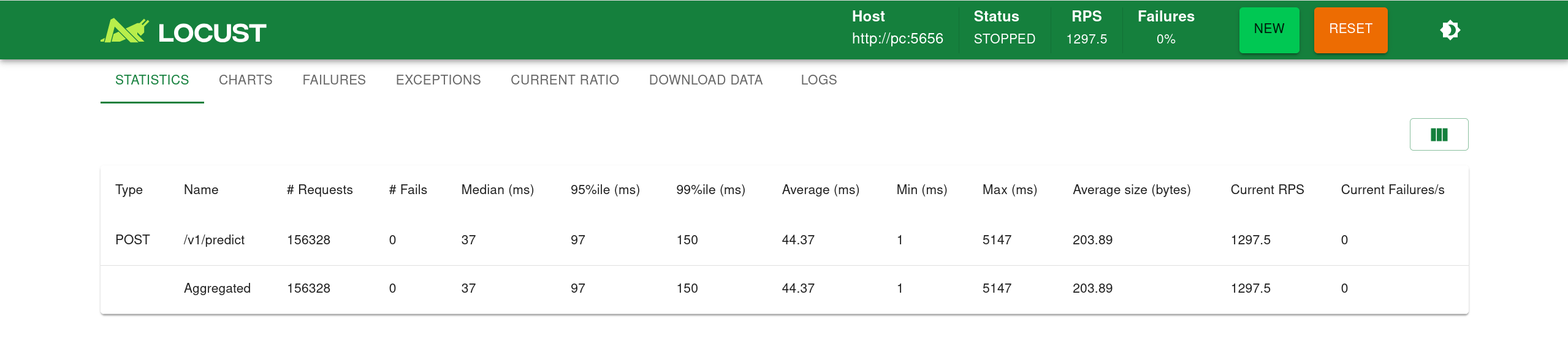

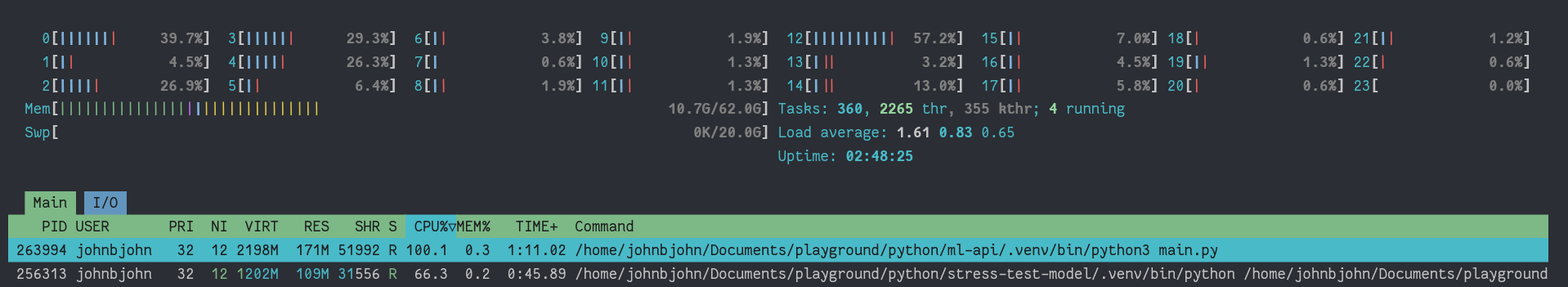

FastAPI

- Avg. RAM usage: 171 MB

- Avg. CPU usage: ~100%

- Thread count: 1 thread (Python’s GIL)

- Min response time: 1ms (almost instant)

- Max response time: 5147ms (5.147 seconds)

- Avg. response time: 44ms (0.044 seconds)

- Avg. RPS: 1200+ requests per second

- Error rate: 0%
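The relative differences between the two sets of figures can be worked out directly from the numbers reported above. The sketch below uses a hypothetical `pct_change` helper; note that the RPS values are reported as lower bounds ("2200+" and "1200+"), so the throughput ratio is approximate.

```python
def pct_change(new: float, baseline: float) -> float:
    """Percentage change of `new` relative to `baseline` (positive means higher)."""
    return 100.0 * (new - baseline) / baseline


# Figures as reported in the results above
crow = {"rps": 2200, "max_ms": 2163, "avg_ms": 43, "ram_mb": 27}
fastapi = {"rps": 1200, "max_ms": 5147, "avg_ms": 44, "ram_mb": 171}

for metric in crow:
    change = pct_change(crow[metric], fastapi[metric])
    print(f"{metric}: Crow.Cpp vs. FastAPI {change:+.1f}%")
```

With these inputs, Crow.Cpp comes out at about +83% on throughput, about -58% on max response time, about -2% on average response time, and about -84% on RAM usage. Measured the other way around, FastAPI's throughput is about 45% lower than Crow.Cpp's.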

Analysis

Crow.Cpp outperformed FastAPI in terms of throughput, handling roughly 80% more requests per second (2200+ vs. 1200+ RPS). This is likely because Crow.Cpp is a C++ framework that can take advantage of multiple threads and the full power of the CPU, while FastAPI is a Python framework constrained by the Global Interpreter Lock (GIL), so in this test it ran on a single thread.

Since Crow.Cpp could use all 24 threads of the CPU, it was able to handle a much higher number of requests per second. In addition, Crow.Cpp used far less RAM than FastAPI (27 MB vs. 171 MB).

However, this speed and efficiency come at a cost: you have to fight with C++ and its complexities, while FastAPI offers a much more developer-friendly experience.

With little to no configuration, FastAPI can be up and running and handle 1200+ requests per second, which is more than enough for many applications.

Conclusion and Recommendations

Use FastAPI if you want a quick and easy way to serve your ML models with good performance and don’t want to deal with the complexities of C++.

Use Crow.Cpp if you need the best possible performance, have strict resource constraints, and are comfortable with C++ development.